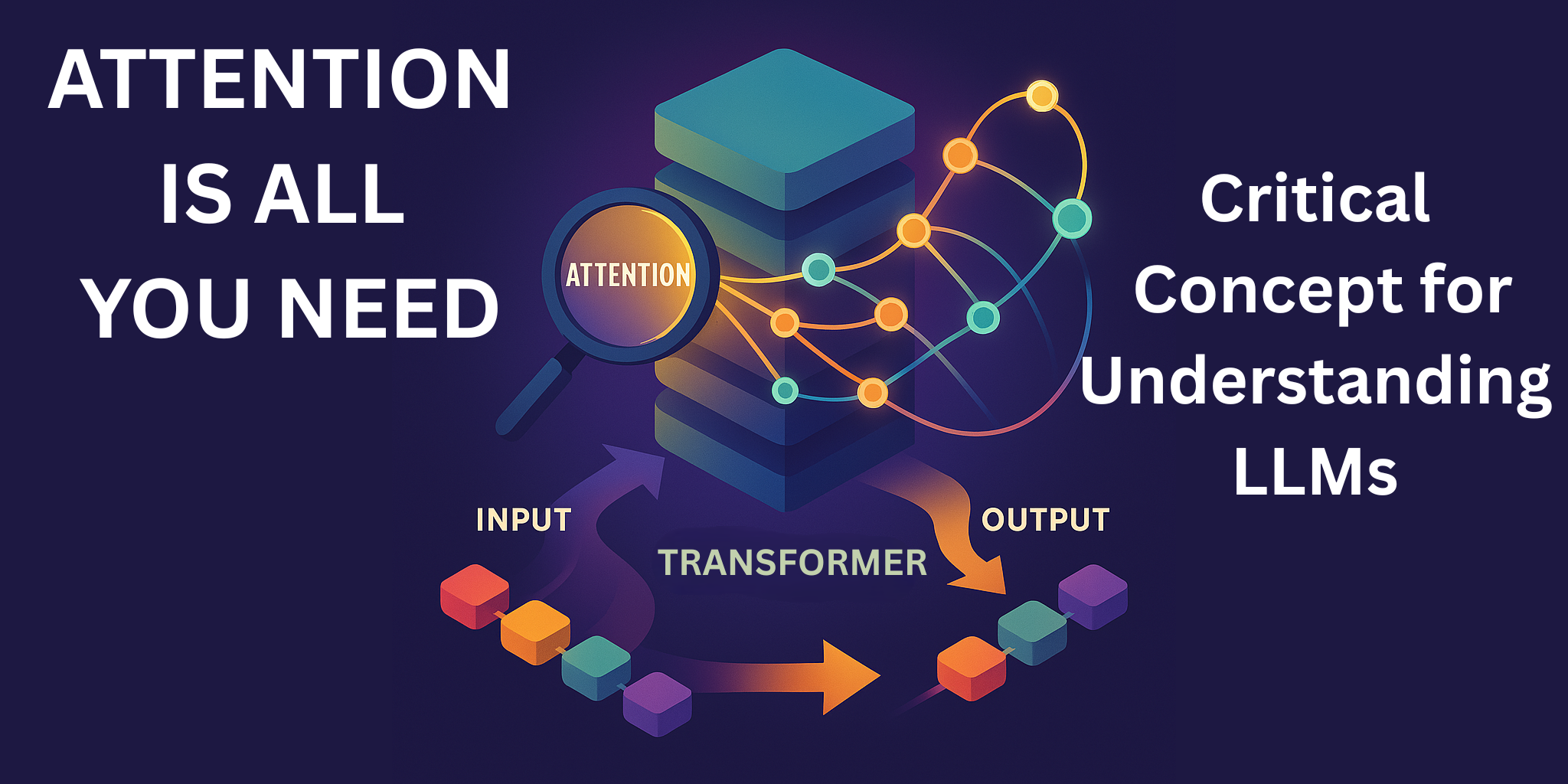

Attention is All You Need: The Magic Behind Modern AI Language Models

The “Attention is All You Need” paper introduced the Transformer architecture, which changed AI language processing by letting a model analyze all the words in a passage at once instead of one at a time. Built on an encoder-decoder structure and attention mechanisms, Transformers track context across a whole sentence, which helps them pick up nuance and resolve ambiguous wording. These gains show up in applications ranging from translation to everyday AI interactions, where they make communication smoother for users.
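To make the attention idea concrete, here is a minimal sketch of scaled dot-product attention, softmax(QKᵀ / √d_k)V, in plain Python. It is an illustration under simplifying assumptions, not the paper's full implementation: the function names (`softmax`, `attention`), the three tokens, and their two-dimensional vectors are all made up for this example, and the same vectors stand in for queries, keys, and values, whereas a real Transformer derives each from learned projections and runs several attention heads side by side.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns (outputs, weights): one output vector and one row of
    attention weights per query.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical toy embeddings (assumed values, chosen only for illustration).
tokens = ["the", "bank", "river"]
x = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]

# Self-attention: every token attends to every token, all in one pass.
outputs, weights = attention(x, x, x)

for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each printed row shows how much one token draws on every other token. Because all rows are computed from the same inputs without depending on each other, the whole sentence can be processed at once, which is the "simultaneous analysis" described above.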